Projects

TÜBİTAK 1001 RESEARCH PROJECT (EEEAG-119E254), METU II, Ankara, Turkey (2019-ongoing)

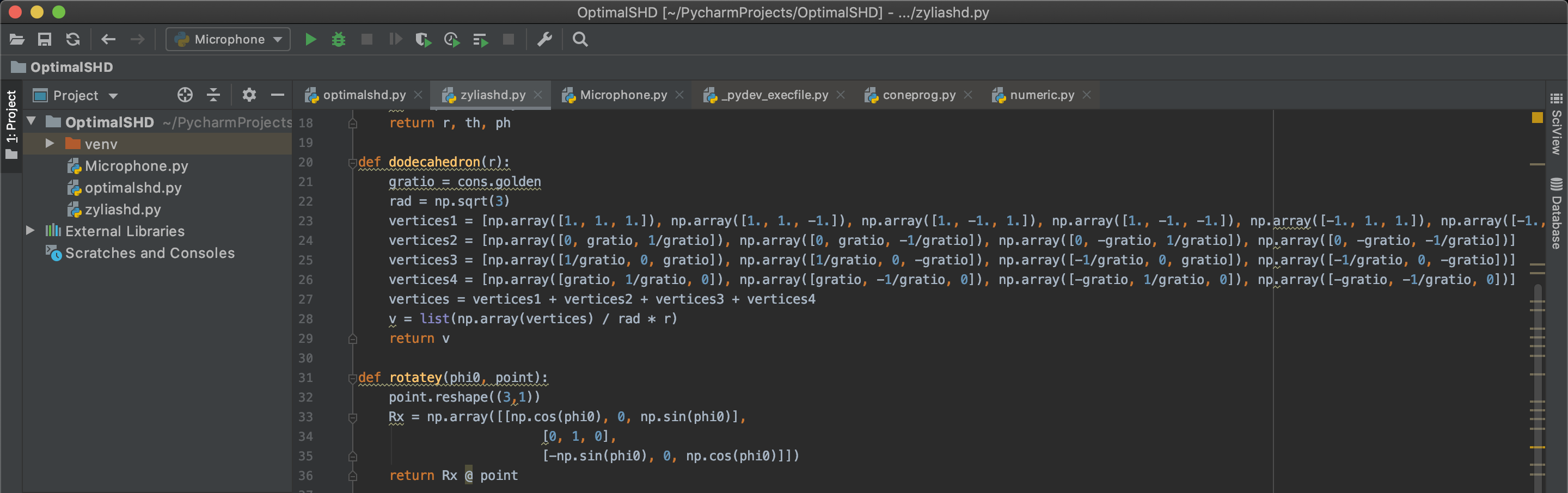

"Audio Signal Processing for Six Degrees of Freedom (6DOF) Immersive Media"

The ISO/IEC MPEG-I standard, expected to be finalised in the second half of 2021, concerns the capture, coding, and reproduction of immersive multimedia signals. More specifically, the standard encompasses navigable 360° videos. This type of content allows a user to navigate an immersive video in six degrees of freedom and has applications in cinema, virtual reality, journalism, broadcast technologies and (following the widespread adoption of 5G networks) immersive telepresence. There are two components in the capture, coding and reproduction of immersive content: video and audio. The former necessitates the use of technologies such as light field capture or video capture using omnidirectional cameras. The first commercial examples of such cameras have recently been demonstrated by Facebook. Work on the capture and processing of sound fields has only recently started, and although some theoretical results have been obtained for interpolating pressure fields for this purpose, the applicability of such an approach in an actual 6DOF system is limited. This project will investigate techniques for 6DOF sound field capture and processing. The research will concentrate on the development of algorithms for the interpolation of sound fields using rigid spherical microphone array (RSMA) recordings. The overarching aim of the project will be to synthesise the sound field at a location in the recording volume using a limited number of RSMA recordings of the acoustic scene. The techniques to be developed to that aim will include sound field extrapolation using spherical harmonic decompositions and object-based sparse approximations using a dictionary-based representation of the plane wave decomposition of the sound field. The planned research also includes work on transcoding the sound field for interactive binaural reproduction. Specifically, transcoding between interpolated higher-order Ambisonics and binaural audio will be investigated.
The theoretical results of the project will be validated using real recordings to be made during the project. The quality of experience afforded by the developed algorithms will be tested using subjective evaluations. The project is planned to have 7 work packages and a duration of 36 months. The project will be the first in Turkey to address Part 4 of the upcoming MPEG-I standard, and it will run in parallel with the development of the standard while contributing to it directly or indirectly. Apart from its ambitious dissemination targets, another important contribution of the project will be the training of experts in this emerging field.

TÜBİTAK 1001 RESEARCH PROJECT (EEEAG-113E513), METU II, Ankara, Turkey (2014-2018)

“Spatial audio reproduction using analysis-based synthesis techniques”

This project aims to develop a spatial audio recording, coding, and reproduction chain based on the analysis of sound scenes from recordings made with special microphone arrays. The project will investigate novel algorithms for sound source localisation and separation and for direct/diffuse separation from recordings, and will develop sound scene authoring tools and the methods needed for 3D and spatial sound source generation.

METU BAP-1 STARTUP GRANT (BAP-08-11-2013-057), METU II, Ankara, Turkey (2013-2015)

“Sound source localisation using open spherical acoustic intensity probes”

This project aims to design and develop open spherical microphone arrays to accurately measure acoustic intensity and to use the acoustic intensity vectors for localising sound sources, even in highly reverberant environments.

TÜBİTAK POSTDOCTORAL RESEARCH FELLOWSHIP (2232), METU II, Ankara, Turkey (2011-2012)

“Design of a scalable acoustic simulator for computer games and virtual reality applications”

This project was funded by the Turkish Scientific and Technological Research Council (TÜBİTAK) under the “2232: Returning Researcher Programme”. The aim of the project was to design a computationally efficient and scalable virtual acoustics simulator that can be used in games and virtual reality applications.

Collaborators: Prof. Veysi İşler, Prof. Zoran Cvetkovic, Dr. Enzo De Sena

EPSRC RESEARCH PROJECT (EP/F001142/1), CDSPR, King’s College London, UK (2008-2011)

“Perceptual Sound Field Reconstruction and Coherent Emulation”

The aim of this project was to design a 5-10 channel audio recording/reproduction system which would enable regenerating an auditory experience that is perceptually equivalent to that of the recorded environment. This project was funded by the Engineering and Physical Sciences Research Council (EPSRC) of the UK.

Collaborators: Prof. Zoran Cvetkovic (PI), Dr. Enzo De Sena (Doctoral student), Prof. Francis Rumsey (Uni. Surrey, now Logophon Ltd.) and Mr. James Johnston

EU FP6 VISNET II NoE, I-Lab, CCSR, University of Surrey, Guildford, UK (2007-2008)

“Networked Audiovisual Media Technologies”

VISNET II was a hugely successful EU FP6 Network of Excellence involving many partners from across Europe. I worked as a postdoctoral research fellow on this project. The research I carried out was mainly related to the acoustical modelling of rooms and enclosures with time-domain models run on multiple processing cores.

EPSRC RESEARCH NETWORK (EP/E00685X/1), I-Lab, CCSR, University of Surrey, Guildford, UK (2006-2009)

“Noise Futures”

This was a research network funded by EPSRC. The members of the network included prominent UK researchers in the areas of acoustics, soundscape analysis and design, architecture, mathematics, audio processing, and social sciences. The aim of the network was to form a panel of experts which would advise governmental bodies such as the Greater London Authority (GLA) and the UK Department for Environment, Food and Rural Affairs (DEFRA) on issues related to noise. I was one of the founding members of the network.

EPSRC PORTFOLIO PROJECT (GR/S72320/01), I-Lab, CCSR, University of Surrey, Guildford, UK (2004-2007)

“Integrated electronics”

This was an umbrella project with several internal collaborators at the University of Surrey. The departments which contributed to the project were I-Lab, CCSR, CVSSP, and ATI. The overarching aim was to generate a virtual health assistant nicknamed the “Guardian Angel”. The project involved many stages, including motion capture, 3D modelling, virtual environments, 3D video processing, and 3D audio. I was employed as a postdoctoral research fellow on this project, and the research I carried out was on the design and testing of new 3D audio algorithms for use in virtual environments.

Collaborators: Dr. Banu Günel, Dr. Şafak Doğan, Prof. Ahmet Kondoz